Trust, Not Technology, Will Decide the Future of Healthcare AI

By Richie Splitt | April 2026

AI is already doing remarkable things in healthcare. It flags a deteriorating patient hours before a clinician sees the signs. It detects a fracture or nodule on a radiology scan that a fatigued eye might miss. It gives a physician three hours back in their week by handling documentation. It catches billing errors that might otherwise cost a health system revenue it earned by delivering care. None of that is hypothetical anymore. It’s operational, and it’s growing fast.

Research indicates that by 2026, an estimated 75% of U.S. health systems will use at least one AI application (Fierce Healthcare). Clinical documentation and ambient listening tools alone are projected to reach 70–80% adoption by 2027 (Health Leaders Media). Domain-specific AI deployment has surged sevenfold since 2024 (Forbes). The technology is proving itself. The question now is whether we build the trust infrastructure to match.

Because here’s what I’ve come to believe: AI’s ceiling in healthcare isn’t technical. It’s relational. The organizations that unlock the most value from AI aren’t the ones with the best tools or widgets — they’re the ones where patients and clinicians genuinely trust how those models are used.

The Trust Problem Is Getting Worse, Not Better

That conviction isn’t just instinct. The data backs it up, and the trend line is concerning. A January 2026 national survey from The Ohio State University Wexner Medical Center found that only 42% of Americans are open to AI being used in their healthcare, down from 52% when the same survey ran in 2024. The share who believe AI can make health processes more efficient also fell, from 64% to 55% (Ohio State Wexner Medical Center / SSRS, January 2026).

At the same time, patients aren’t waiting for providers to guide them. A KFF tracking poll from February–March 2026 found that roughly one in three U.S. adults (32%) are already turning to AI chatbots for health information and advice (KFF Tracking Poll on Health Information and Trust, March 2026).

Think about what those two data points mean together: patients are increasingly using AI on their own while simultaneously trusting it less in clinical settings. That gap, between adoption and confidence, is exactly where trust needs to be built.

Trust Is the Multiplier

Trust in healthcare has always meant something specific: a patient’s confidence that their clinician and institution act with competence, integrity, and genuine concern, especially when the patient is vulnerable and information-poor. That trust is what makes open communication, informed consent, and strong outcomes possible. It’s not a soft metric. It’s the foundation.

AI doesn’t replace that dynamic; it extends it. When a physician uses a predictive tool to intervene earlier, when a care team uses AI-driven insights to close gaps for high-risk patients, when an organization uses ambient documentation to give clinicians more face time with the people in front of them, trust is what makes patients receptive and clinicians confident. Without it, even the best tools sit underused or resisted.

A March 2026 study published in JAMA Network Open makes this concrete. In a collaborative survey of 3,000 U.S. adults presented with AI-assisted diagnosis scenarios, the presence of a clinician increased the probability of a patient choosing that visit by 18.4%. Respondents preferred every form of AI governance (FDA approval, national certification, local hospital certification) over no governance at all. The researchers concluded that AI performance, clinician presence, disclosure of representative training data, and systemic governance were all associated with increased patient trust and acceptance (Bracic et al., JAMA Network Open, March 2026).

People don’t reject AI in care; they reject AI they can’t see, can’t understand, or didn’t know was there.

Governance Isn’t Bureaucracy: It’s How Trust Scales

This is where I think many organizations have an opportunity, and where healthcare already has a playbook.

Healthcare already knows how to pair powerful tools with strong warnings. Think of black box warnings on drug labels or sepsis alerts in the EHR. Those aren’t designed to slow progress; they are designed to scale it safely. Is it possible that AI needs the same level of visible, institutional “labeling” if we expect patients and clinicians to trust it?

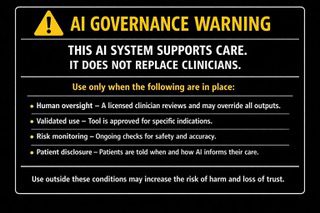

Imagine something like an AI Governance Label — a clear, standardized disclosure that travels with every AI-enabled tool in a care setting, covering human oversight, validated use, risk monitoring, and patient disclosure.

⚠️ AI GOVERNANCE WARNING ⚠️

THIS AI SYSTEM SUPPORTS CARE. IT DOES NOT REPLACE CLINICIANS.

Use only when the following are in place:

Human oversight – A licensed clinician reviews and may override all outputs.

Validated use – Tool is approved for specific indications.

Risk monitoring – Ongoing checks for safety and accuracy.

Patient disclosure – Patients are told when and how AI informs their care.

Use outside these conditions may increase the risk of harm and loss of trust.

That kind of transparency doesn’t slow adoption. It gives clinicians confidence to use the tools and gives patients confidence to trust the care. It moves governance from a back-office function to a visible institutional commitment, and the organizations that get there first don’t just avoid risk, they accelerate adoption, clinician buy-in, and patient engagement.

This Isn’t Theoretical: It’s Already Becoming Law

Several states are no longer waiting for organizations to self-govern. Texas enacted the Responsible Artificial Intelligence Governance Act (TRAIGA), effective January 1, 2026, requiring healthcare providers to give patients written disclosure when AI is used in their diagnosis or treatment, before or at the time of the interaction. California’s AI Transparency Act (SB 942), also effective January 2026, requires covered providers to offer tools allowing users to determine whether content was AI-generated (Manatt Health AI Policy Tracker; Akerman LLP).

At the federal level, the White House released a National Policy Framework for Artificial Intelligence on March 20, 2026, outlining legislative recommendations for a unified approach. In healthcare, the framework relies on existing agencies (the FDA, CMS, and ONC) rather than creating a new regulator, and AI policy is expected to evolve through agency action and targeted congressional oversight (Holland & Knight, March 2026).

The direction is clear: disclosure, oversight, and governance are becoming requirements, not options. Organizations that build these practices now are positioning themselves ahead of regulation, not scrambling to catch up.

Shadow AI: The Governance Gap Leaders Can’t Ignore

There’s another dimension to this challenge that deserves attention. Experts at Wolters Kluwer have called 2026 “the year of governance,” noting that health system C-suites are playing catch-up to clinicians who have rapidly adopted generative AI applications, often outside institutional oversight. These users still struggle to identify AI-generated responses that sound authoritative but are clinically invalid (Wolters Kluwer, 2026 Healthcare AI Trends).

A Norton Rose Fulbright analysis from March 2026 reinforces the urgency: AI adoption is outpacing governance in U.S. hospitals, and boards must strive for clear accountability for AI decisions, visibility into where AI is deployed, guardrails before tools are implemented, and ongoing performance monitoring (Norton Rose Fulbright, March 2026).

Shadow AI, meaning clinicians and staff using AI tools the organization hasn’t vetted, isn’t a hypothetical risk. It’s a present one. And it makes the case for visible, institutional governance even stronger.

The Opportunity in Front of Us

The adoption curves are steep, the ROI stories are real, and the potential to reduce burnout, improve outcomes, and lower costs is enormous. AI is one of the most promising tools healthcare has gained in a generation. But tools only work when the people they serve believe in them and use them appropriately.

The leaders who define this era aren’t the ones who deploy AI the fastest. They’re the ones who pair speed with transparency — who treat trust as a design requirement, not an afterthought. The organizations that get this right find that trust doesn’t slow AI down. It’s the thing that lets AI reach its full potential.

Sources

- Bracic, A., et al. “Factors for Patient Trust and Acceptance of Medical Artificial Intelligence.” JAMA Network Open, March 2026.

- Ohio State University Wexner Medical Center / SSRS. “Public Trust in AI in Health Care Is Slipping, Survey Finds.” April 2026.

- KFF. “Tracking Poll on Health Information and Trust: Use of AI for Health Information and Advice.” March 2026.

- Holland & Knight. “White House Releases a National Policy Framework for Artificial Intelligence.” March 2026.

- Manatt Health. “Health AI Policy Tracker.” 2026.

- Akerman LLP. “New Year, New AI Rules: Healthcare AI Laws Now in Effect.” January 2026.

- Norton Rose Fulbright. “AI Governance in Healthcare: Key Issues for Health System Boards.” March 2026.

- Wolters Kluwer. “2026 Healthcare AI Trends: Insights from Experts.” December 2025.

- American Medical Association. “2026 Physician Survey on Augmented Intelligence.” March 2026.